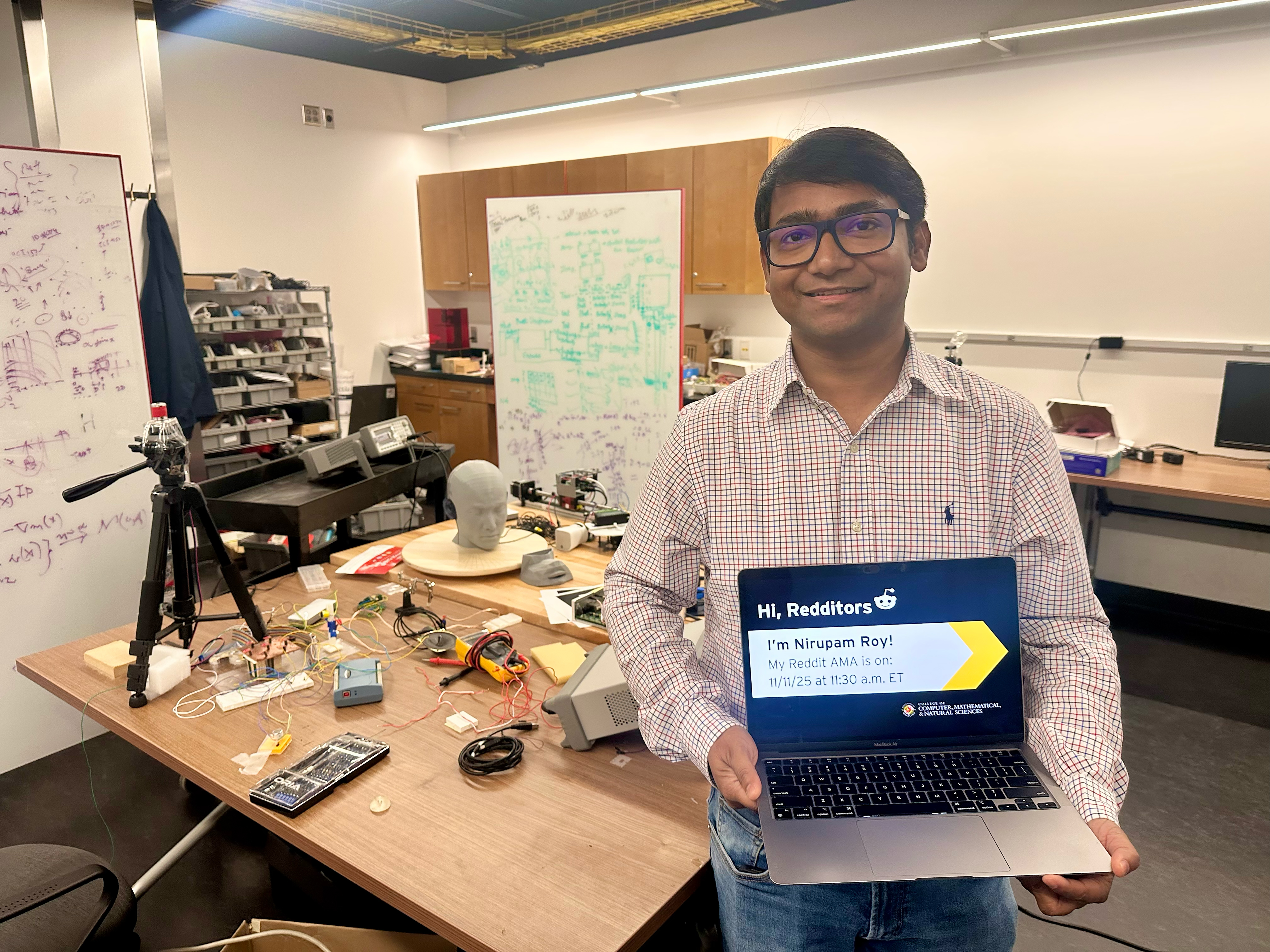

Computer Scientist Nirupam Roy Answers Questions About Deepfakes

The College of Computer, Mathematical, and Natural Sciences hosted a Reddit Ask-Me-Anything spotlighting secure machine hearing research.

University of Maryland Computer Science Associate Professor Nirupam Roy participated in an Ask-Me-Anything (AMA) user-led discussion on Reddit on November 11, 2025, to answer questions about deepfakes and secure machine hearing.

Roy's research explores how machines can sense, interpret, and reason about the physical world by integrating acoustics, wireless signals, and embedded AI. His work bridges physical sensing and semantic understanding, with recognized contributions across intelligent acoustics, embedded AI, and multimodal perception.

Aritrik Ghosh and Harshvardhan Takawale, computer science Ph.D. students in Roy’s group, joined him to answer questions. This Reddit AMA has been edited for length and clarity.

What do you think about digitally signing videos or images?

(Roy) One of the technologies to prevent deepfakes is to embed metadata at the moment of capture, directly on the device that records the content, and many devices already do this. It works in the majority of cases. The metadata can fail, however, if it does not capture the semantics of the content (image/video). This metadata-based prevention also requires devices to cooperate and follow a standard, which is difficult to achieve across the diverse range of devices that can take pictures or video.

Another example is the Coalition for Content Provenance and Authenticity (C2PA), whose standard records when the image was taken and whether it has been edited since. If we can establish the timeline, this can help us establish authenticity.
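The timeline idea can be illustrated with a small hash chain. This is a minimal sketch and not the C2PA format: the function names (`append_entry`, `verify_chain`) and the entry fields are assumptions made for illustration. Each entry records an action, a timestamp, and a hash of the content, and links to the previous entry, so rewriting any earlier step breaks every later link.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder "previous digest" for the first entry


def entry_digest(body: dict) -> str:
    """Hash an entry's fields deterministically (keys sorted)."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def append_entry(chain: list, action: str, timestamp: str, content: bytes) -> list:
    """Return a new chain with one more entry linked to the previous one."""
    body = {
        "action": action,
        "timestamp": timestamp,
        "content_hash": hashlib.sha256(content).hexdigest(),
        "prev": chain[-1]["digest"] if chain else GENESIS,
    }
    return chain + [{**body, "digest": entry_digest(body)}]


def verify_chain(chain: list, final_content: bytes) -> bool:
    """Check every link, then check the last entry matches the content we hold."""
    prev = GENESIS
    for entry in chain:
        body = {k: v for k, v in entry.items() if k != "digest"}
        if body["prev"] != prev or entry_digest(body) != entry["digest"]:
            return False
        prev = entry["digest"]
    final_hash = hashlib.sha256(final_content).hexdigest()
    return bool(chain) and chain[-1]["content_hash"] == final_hash


# Usage: a capture followed by one declared edit.
chain = append_entry([], "capture", "2025-11-11T10:00:00Z", b"raw pixels")
chain = append_entry(chain, "crop", "2025-11-11T10:05:00Z", b"cropped pixels")
```

On its own, a chain like this only detects inconsistency. Anyone could rebuild the whole chain from scratch, so a practical system would also need each entry to be signed by a trusted party.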

(Takawale) In a sense, software-only solutions such as public-key signatures are not foolproof. A combined hardware-software solution is a better alternative.
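As a rough illustration of capture-time signing, the sketch below binds metadata to a hash of the content so that swapping the pixels invalidates the record. It rests on several assumptions: `sign_capture` and `verify_capture` are hypothetical names, and an HMAC with a shared device secret stands in for the public-key signature a real camera would produce. Consistent with the point above about hardware, a real key would have to live in secure hardware rather than in software.

```python
import hashlib
import hmac
import json


def _message(record: dict) -> bytes:
    """Serialize the record deterministically before authenticating it."""
    return json.dumps(record, sort_keys=True).encode("utf-8")


def sign_capture(device_key: bytes, content: bytes, metadata: dict) -> dict:
    """Bind metadata to the content hash and authenticate both together.

    HMAC is a stand-in (assumption) for an asymmetric device signature.
    """
    record = dict(metadata, content_hash=hashlib.sha256(content).hexdigest())
    tag = hmac.new(device_key, _message(record), hashlib.sha256).hexdigest()
    return {"record": record, "tag": tag}


def verify_capture(device_key: bytes, content: bytes, signed: dict) -> bool:
    """Accept only if the tag is valid and the content matches the signed hash."""
    record = signed["record"]
    expected = hmac.new(device_key, _message(record), hashlib.sha256).hexdigest()
    tag_ok = hmac.compare_digest(expected, signed["tag"])
    content_ok = record.get("content_hash") == hashlib.sha256(content).hexdigest()
    return tag_ok and content_ok


# Usage: the key and metadata values here are made up for the example.
key = b"example-device-secret"
signed = sign_capture(key, b"image bytes", {"captured_at": "2025-11-11T10:00:00Z"})
```

If the key can be extracted from software, an attacker can sign fabricated content just as easily, which is the weakness of software-only schemes.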

How do we make guarantees about AI safety in the face of undecidability?

(Roy) Like any profound technology, AI has created many possibilities for advancement. Again, like all profound technologies, it can be used in adversarial ways. Research is evolving to safeguard against such abuses of this technology. Industry is implementing guardrails against misuse as well. At the same time, we should also make people aware of and prepared for this new space. Apart from our research, we also spend time on education. One of our recent efforts is Cyber-Ninja, a gamified agentic AI platform that teaches teenagers about social engineering attacks, AI exploitation and online threats.

What's going to stop people from creating a completely new world that you see through your VR goggles, and you don’t even know it’s not real? Is that possible?

(Roy) We always see reality through our own perceptions, biases, likes and dislikes. Some technology may make this more obvious, but I believe that, at the end of the day, it is a projection of our own perception. We already choose which newspapers we read or conferences we attend based on our own biases. It reflects our own structure of mind and confirmation bias. Technology cannot operate without our intentions.

How far are we from deepfake video that is indistinguishable from reality? Is there work being done to have a system that authenticates real video and gives it a stamp of approval? Are we destined for a perpetual arms race between deepfakers and authenticators?

(Roy) Images and videos are not reality. They are representations of reality, and our trust in them depends not only on the picture itself but also on various other factors: the context of those images, our internal bias, our urgency to reach conclusions, etc. Expectations of quality also shape our perception. For example, 10 years ago, 10-kilobyte images could be considered high quality, but now, we question even several megabytes of image data. That's another reason it is hard to unequivocally label something as fake or real. Sometimes, we can only label whether the image has been altered from its original creation.

We can give a stamp of approval certifying that an image is free of malicious edits, but at the expense of additional information added to the image, such as metadata, some novel encryption technique to include semantic information about the image, and so on.

To answer the arms race question, we first need to understand that deepfake creation and authentication do not differ in technology; they differ in our intentions for using those technologies. As long as our intentions conflict, we will forever be using technology to serve those purposes, which can be interpreted as an arms race between intentions. I don't necessarily see it as an arms race between technologies.

With regard to machine hearing, are you or any other teams you know of working on real-time audio stream processing, say for hearing aids?

(Roy) Hearing aids are a special scenario. Deepfake prevention is not necessarily required for these kinds of personal devices. If the manufacturing and distribution process can be controlled, which is often done by the distributor, then the authentic operations of those devices can be guaranteed. Unlike generic issues with recording, publishing and eavesdropping of audio data, the audio stream generated by hearing aids is fairly secure.

That said, securing real-time audio data (and real-time translation services) is still an active research area. One of our recent research papers (VoiceSecure) also explored a solution in this field. You can read more about VoiceSecure here.

In fact, one of our lab's upcoming business ventures will address this exact issue. Please stay tuned on our lab website!

How viable is it to train a joint embedding space for authentic audio-visual pairs that penalizes synthetic co-articulation artifacts, and can self-supervised contrastive learning remain robust as generative diffusion models improve temporal alignment?

(Roy and Ghosh) The so-called synthetic artifacts are becoming undetectable as advanced AI systems produce better lip sync and more natural-sounding audio. So I do not rely too much on the gap between human perception and the limitations of generative AI technologies. Rather, a more feasible option would be to build on provenance, meta-information and encryption-based techniques.

How do you design defenses against deepfakes that remain effective in the long term? Won't the exponential escalation of AI capacity outpace our human attempts to combat AI-enabled human rights violations?

(Roy) I have an interesting observation about human trust in publicly available content. I remember my grandmother used to believe everything that came in typed or printed format (like a newspaper). While society has moved away from that notion of trust, many still believe a video recording of an incident to be real. Although recent deepfakes are pushing us away from that notion of trust, I am optimistic that our society will naturally restructure this norm. Evolving defense technologies will also play a role in this future. We are simply in the transition phase.

Will deepfakes soon get to the level that they can easily bypass "anti-deepfake" techniques?

(Roy) This kind of solution falls under the category of challenge-response solutions, where, for example, the system generates a challenge to move the hand in a specific way, and if the user can do it, it proves that the user is in front of the camera. But note that it might not be too hard for a resourceful attacker to develop a system that can use language models to understand the challenge and generate fake content to match the challenge. I still put my trust in prior information and encryption-based systems to fight against this.
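A challenge-response liveness check of the kind described here might look like the following. This is a sketch only: the gesture names, `issue_challenge` and `verify_response` are all hypothetical, and `observed_gestures` is assumed to come from some separate gesture recognizer that is not shown.

```python
import secrets
import time

# Hypothetical gesture vocabulary; a real system would define its own.
GESTURES = ["raise_left_hand", "raise_right_hand", "turn_head_left",
            "turn_head_right", "blink_twice"]


def issue_challenge(length=3, ttl_seconds=10.0, now=None):
    """Create an unpredictable, short-lived sequence of gestures to perform."""
    now = time.time() if now is None else now
    rng = secrets.SystemRandom()
    return {
        "nonce": secrets.token_hex(16),  # ties a response to this challenge
        "gestures": [rng.choice(GESTURES) for _ in range(length)],
        "expires_at": now + ttl_seconds,
    }


def verify_response(challenge, nonce, observed_gestures, now=None):
    """Accept only a timely response that repeats the exact gesture sequence."""
    now = time.time() if now is None else now
    if now > challenge["expires_at"]:
        return False
    if not secrets.compare_digest(nonce, challenge["nonce"]):
        return False
    return observed_gestures == challenge["gestures"]
```

The check only confirms that the right gestures arrived in time. It cannot tell whether a person or a generative model produced them, so an attacker who can parse the challenge and synthesize matching video quickly enough would pass as well.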

Is there a relationship between the technology used to create auto-generated content—such as LLMs, image and video generators, and so on—and the technology used to detect those?

(Roy) There are some relationships between them. For instance, some generative techniques attempt to reduce the error between their output and real content. A family of detection techniques can rely on this residual error to detect fake content. However, the available generative techniques and detection measures are too diverse for there to be any necessary correlation between them.