Creating a Virtual World that’s a Feast for the Eyes…and Ears

Computer Science Assistant Professor Ruohan Gao and Distinguished University Professor Ming Lin turned a single image into an explorable world that sounds like the real thing.

Ruohan Gao didn’t set out to study sound. He began his work in computer vision, drawn to what he calls the most “obvious” way humans understand the world: by sight.

“Vision is so fundamental for many creatures, certainly for humans,” he said. “Think of how much vision has shaped the evolution of living things!”

But he knew that perspective was incomplete.

“Of course, when we interact with the world, we also listen, we also touch, we also feel,” he said.

When Gao joined the University of Maryland in 2025 as an assistant professor in the Department of Computer Science, that broader thinking pushed his research toward the multisensory dimensions of artificial intelligence (AI) and what he calls Multisensory Machine Intelligence—an approach that reflects people’s true multi-layered experience.

“We want to mimic—and maybe enhance—how humans hear, see and feel the world,” he said, “by building intelligent machines that have those capacities in a multisensory virtual space.”

Filling the silence

That’s where SonoWorld enters the picture.

SonoWorld is an explorable virtual landscape that fills a crucial gap in the virtual experience. While recent advances have made it possible to generate immersive 3D environments from single images, “all these frameworks have one missing ingredient,” Gao said. “They are silent.”

But sound is inseparable from how most of us experience the world.

“We are living in 3D, and we don’t just see what’s around us—we hear it,” he said. “We hear things we can’t see, too. I can hear you talking to me, but I can also hear birds chirping outside. I can hear the hum of the air conditioner in the building. Sound is everywhere.”

SonoWorld turns a single image into a fully immersive 3D audiovisual scene, allowing the user to “walk” deep into the image and hear what’s going on throughout.

“Our goal was to add a spatial sound field that is aligned with the visual content,” Gao explained, which means sound doesn’t just exist in the scene—it behaves like it would in real life.

“If there is a violin on your left, you should hear it from the left,” he said. “If you walk toward a waterfall, the sound should get louder.”
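The two cues Gao describes, direction and distance, can be illustrated with a small sketch. It is not SonoWorld's method. It assumes a flat 2D scene, a textbook constant-power stereo pan and a simple inverse-distance falloff, and the function name `stereo_gains` and its parameters are hypothetical.

```python
import math


def stereo_gains(listener_xy, facing_rad, source_xy, ref_dist=1.0):
    """Return (left_gain, right_gain) for one point source.

    Illustrative assumptions, not taken from the SonoWorld paper:
    constant-power stereo panning and inverse-distance attenuation.
    """
    dx = source_xy[0] - listener_xy[0]
    dy = source_xy[1] - listener_xy[1]

    # Clamp the distance so the gain never exceeds 1.0 close to the source.
    dist = max(math.hypot(dx, dy), ref_dist)
    atten = ref_dist / dist

    # Angle of the source relative to where the listener is facing.
    # sin(rel) is +1 for a source directly to the left, -1 directly to the right.
    rel = math.atan2(dy, dx) - facing_rad
    lateral = math.sin(rel)

    # Map to a pan position: 0.0 = fully left, 1.0 = fully right.
    pan = (1.0 - lateral) / 2.0

    # Constant-power pan law keeps left^2 + right^2 independent of pan.
    left = math.cos(pan * math.pi / 2) * atten
    right = math.sin(pan * math.pi / 2) * atten
    return left, right


# Listener at the origin, facing "north" (+y).
facing_north = math.pi / 2

# A violin two units to the listener's left is heard mostly in the left ear.
violin_left, violin_right = stereo_gains((0, 0), facing_north, (-2, 0))

# Walking toward a waterfall straight ahead makes it louder in both ears.
far_left, far_right = stereo_gains((0, 0), facing_north, (0, 10))
near_left, near_right = stereo_gains((0, 5), facing_north, (0, 10))
```

In this sketch the violin's left gain exceeds its right gain, and the waterfall's gains grow as the listener halves the distance. A real spatial audio renderer would also account for elevation, head shape and room acoustics.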

Achieving such complexity requires the system to do more than recognize objects. It has to infer the noises they make and the way those sounds change in different contexts.

“As a computational task, that’s very challenging,” he said.

But teamwork is powerful, noted Gao’s collaborator, Distinguished University Professor of Computer Science Ming Lin, who brought her decades of experience and expertise in computer animation, geometric modeling and virtual reality to help improve both the perceptual and physical realism of the final framework. Also on the team were UMD computer science Ph.D. students Derong Jin and Xiyi Chen, who played key roles in developing the method and co-authored the SonoWorld paper with Gao and Lin. The paper will be presented at the IEEE/Computer Vision Foundation Conference on Computer Vision and Pattern Recognition in June.

“We were able to do this project because of joint interest from the audio reconstruction side and the visual reconstruction side,” said Lin, who has joint appointments in the University of Maryland Institute for Systems Research, the Department of Electrical and Computer Engineering, and the Maryland Robotics Center and holds the Dr. Barry L. Mersky and Capital One Professorships in the Department of Computer Science at UMD.

“As far as I know, this is the first paper that reconstructs both audio and visual from a single image,” she said.

Sound stages

The process unfolds in several stages. First, the system expands the original image into a 360-degree view.

“We generate a 360 panorama to capture the whole scene,” Gao explained.

Next, the system uses AI foundation models to identify and map the locations of objects within that space—things like trees, rivers and buildings. The SonoWorld system then predicts which of those objects produce sound and what they should sound like.

“There are different types of sound sources,” Gao said. “Birds are a type of point source. A river is more like an area source. And then there are ambient sounds, like insects or wind. Each type requires different treatment in our program.”
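One way to picture why each type needs its own handling is the sketch below. The article does not say how SonoWorld treats each category, so everything here is an assumption for illustration: the `SoundSource` class, the `gain_at` function, the idea of sampling an area source as a set of points, and the inverse-distance falloff are all hypothetical.

```python
import math
from dataclasses import dataclass, field


@dataclass
class SoundSource:
    label: str
    kind: str  # "point", "area", or "ambient"
    # Hypothetical representation: one (x, y) position for a point source,
    # several sampled positions for an area source, none for ambient sound.
    points: list = field(default_factory=list)


def gain_at(source, listener_xy, ref_dist=1.0):
    """Loudness factor in (0, 1] for a listener position (illustrative only)."""
    if source.kind == "ambient":
        # Ambient sound has no location, so it is equally loud everywhere.
        return 1.0
    if not source.points:
        raise ValueError(f"{source.kind} source {source.label!r} needs positions")
    # Point and area sources fade with distance to their nearest position.
    nearest = min(math.dist(listener_xy, p) for p in source.points)
    return ref_dist / max(nearest, ref_dist)


bird = SoundSource("bird", "point", [(0, 4)])
river = SoundSource("river", "area", [(x, 2) for x in range(-5, 6)])
wind = SoundSource("wind", "ambient")

# Walking four units sideways, parallel to the river and away from the bird.
bird_before, bird_after = gain_at(bird, (0, 0)), gain_at(bird, (4, 0))
river_before, river_after = gain_at(river, (0, 0)), gain_at(river, (4, 0))
wind_before, wind_after = gain_at(wind, (0, 0)), gain_at(wind, (4, 0))
```

Under these assumptions the bird gets quieter as the listener walks away from it, the river stays equally loud along its bank, and the wind never changes.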

Finally, the system generates spatial audio that matches the 3D environment.

“The sounds have to be attached to plausible locations in the 3D world,” he said, so that as a user moves through the scene, both the visuals and the audio shift naturally together.

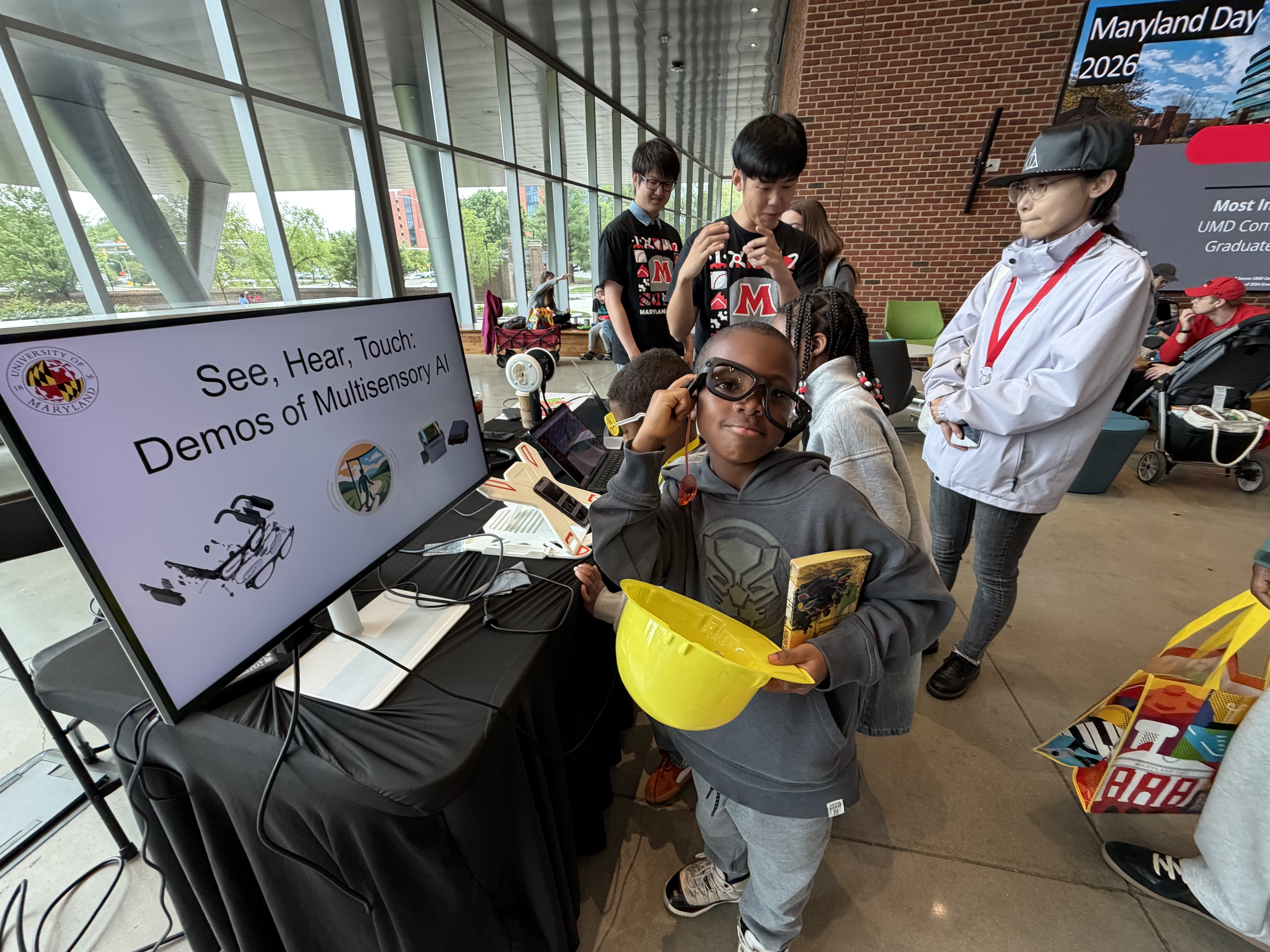

The result, which the team demonstrated at Maryland Day 2026, is what Gao calls “an environment that can be explored visually and acoustically at the same time. SonoWorld is a world with built-in sensory layers, like the real thing.”

A more seamless (virtual) world

While the technology is still emerging, Gao sees immediate potential in a variety of entertainment experiences.

“For content creation, this is very useful,” he said. “Right now, you often have separate pipelines: one for visuals, one for sound. In the future, you could generate both together from a single image.” That could reshape workflows in filmmaking, gaming and digital media, making immersive experiences easier and more efficient to create.

Gao also sees broader possibilities, like virtual and augmented reality, where sound plays a critical role in making environments feel real. Lin noted that the system may also be applicable to design.

“If an auditorium isn’t designed correctly for audio quality, a musical or other experience will be very different,” she explained. “For a designer to use this technology to make design choices is very powerful.”

Gao also sees long-term applications in robotics, where multisensory data could help machines better understand and interact with their surroundings, for example, in fine-grained, dexterous manipulation of objects.

Beyond any single application, Gao sees SonoWorld as part of a larger shift in AI.

“There’s a concept called spatial intelligence,” he said, referring to the ability of machines to understand the 3D world. “But I believe it should be multisensory spatial intelligence.”

In other words, a true understanding of the world can’t come from vision alone.

Layers to come

“There is still a lot to do,” Gao noted.

Current virtual environments are largely static, and one of the next challenges is adding motion—moving from 3D to what he calls “4D,” where both objects and sounds change over time.

“Life certainly doesn’t sit still,” he said. “Cars pass by, people move, sounds change.”

Plus, life is tactile, and touch is another sense the team hopes to program in. The team is also working on reconstructing and exploring worlds inside videos.

“It’s a big step because video includes multiple perspectives and a temporal component not present in a single image,” Lin said. “But we expect to be able to capture dynamic, moving objects and changing scenes very soon.”

The collaborative effort that led to SonoWorld’s release reflects the broader nature of the challenge itself. Multisensory intelligence sits at the intersection of multiple fields, and Gao believes the future of AI will depend on bringing those areas together.

“We should have a unified way to represent and understand this multisensory world,” he said, “instead of treating each modality separately.”

Because in the end, the goal is not just to build better models, but to build virtual systems that experience life in all its vibrance, using all the senses—just like we do in the real world.